Confident, Wrong, Unaware: Why AI Can Produce Errors But Cannot Experience Being Wrong

Clarity Is the Product: Series 10 · Technology | Lumen8 Media

By Michelle Lanier, Creative Director & Founder, Lumen8 Media

If you're joining mid-series: The Foundation posts established why video should function as educational infrastructure and how Stripe, Twilio, MongoDB, and AWS built it. Series 01 (The Mystery Machine) explained the cognitive science behind curiosity-driven content: why uncertainty, not information, is what activates deep learning. Series 02 (The Valley Is the Feature) introduced the Dunning-Kruger narrative arc and the neuroscience of failure-first storytelling. Series 09 (The Seat Is Empty) named the gap in B2B content: the executive buyer in complex technology still isn't getting educational content built on these mechanisms. This post is about why AI, despite the scale of its output, cannot fill that seat.

The flood arrived faster than anyone predicted.

In 2023, 60% of consumers said they preferred AI-generated creator content to traditional creator content. By January 2026, that number had collapsed to 26%.[1] More than 20% of videos surfaced to new YouTube users now qualify as what critics have taken to calling "AI slop."[2] Americans believe only 41% of online content is accurate, factual, and made by humans. Three-quarters say their trust in the internet is at an all-time low.[3]

The backlash has a texture to it. Brands are requesting imperfections in creator deals (unwashed dishes, unmade beds, hair that isn't perfect) because overly polished content now reads as machine-made.[1] Audiences, in the words of one talent manager, "walk away feeling ungratified" after consuming AI-generated content.[1] The CMO of Billion Dollar Boy put it directly: "AI can't replicate the messiness of human creativity."

The question most marketing leaders are asking in response is the wrong one.

They're asking: Is AI content good enough?

The right question is: Can AI replicate the cognitive mechanisms that make educational content work in the first place?

The answer is structural, not qualitative. Every B2B company trying to build educational content that moves a buyer from confusion to confidence will feel this distinction directly.

What Educational Content Actually Requires

This series has spent five posts establishing the cognitive architecture behind content that builds genuine understanding.

Series 01 established that curiosity isn't an emotion. It's a cognitive state produced by a specific gap between what someone knows and what they want to know.[4] The brain's reward system activates in anticipation of closing that gap, preparing the hippocampus for enhanced memory encoding. Educational content that opens with a question the audience is already asking doesn't just feel more engaging. It physiologically primes the brain for better retention.

Series 02 established that the most durable learning happens on the other side of a specific arc: the Dunning-Kruger curve. The protagonist enters at the Peak of Mount Stupid: high confidence, low competence. Something goes wrong. Confidence collapses in the Valley of Despair. Through genuine trial and error, real understanding emerges on the Slope of Enlightenment. What makes this arc useful for educational content is that it maps onto how every audience member has actually learned anything hard. And when a viewer watches a story that matches their own experience, mirror neurons fire, not just in language-processing areas but in the higher-order networks responsible for conceptual understanding and emotional response.[5]

That's the mechanism. Curiosity activates the brain for learning. The failure arc earns the authority to teach. Mirror neurons transfer the protagonist's understanding to the audience's own neural architecture.

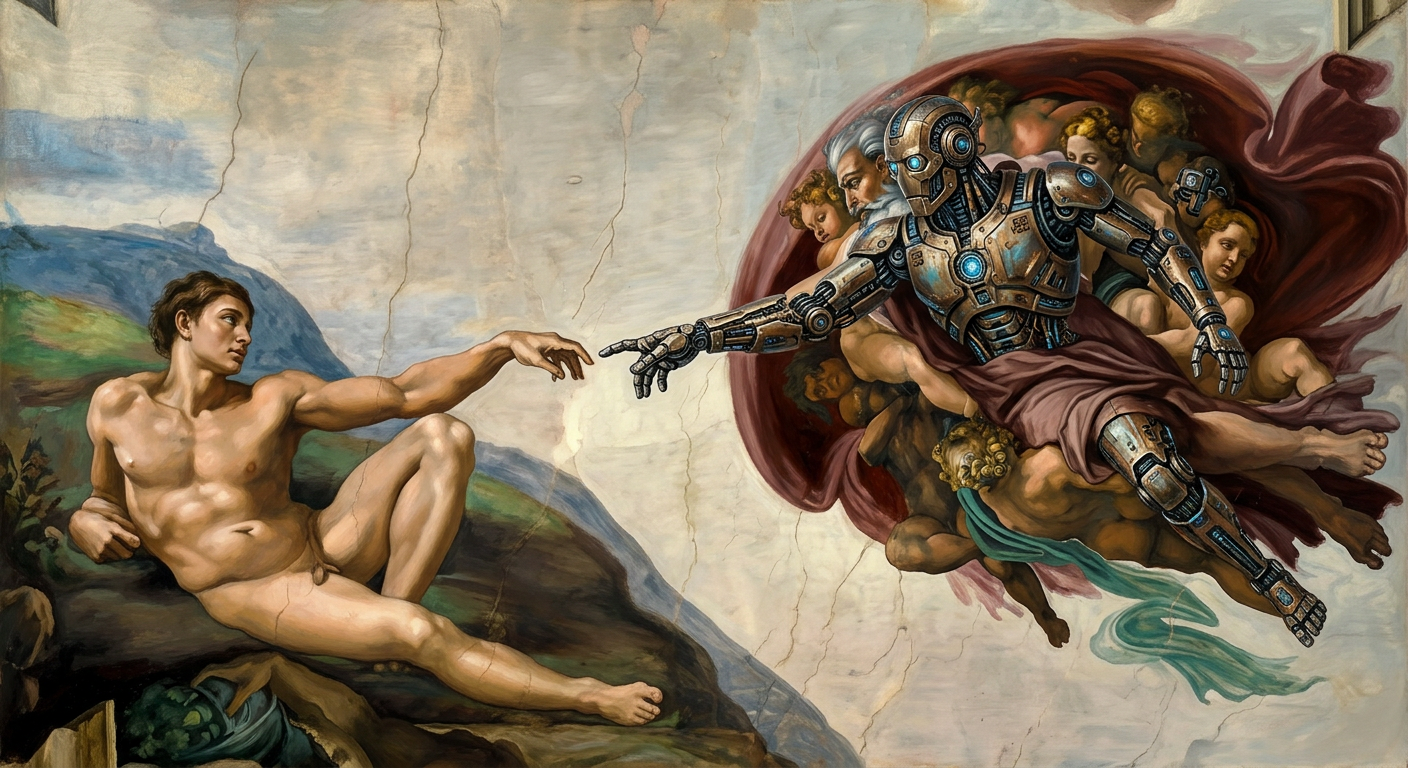

Now ask the question plainly: Can AI replicate any of this?

The Structural Problem

AI has been trained on an extraordinary volume of human writing about confusion, failure, and recovery. It can produce statistically plausible descriptions of what it feels like to misunderstand something, get it wrong, and gradually work through to clarity. In terms of surface-level output, these descriptions can be indistinguishable from human-authored ones.

But AI has never been confused.

It has never been at the Peak of Mount Stupid, certain it understood something it didn't. It has never experienced the specific collapse of confidence that happens when a mental model fails against reality. It has never iterated through the slow, humbling process of building genuine competence from the wreckage of a wrong assumption.

The authority that comes from "I tried this and it cost us three months and here's what we learned" is not replicable by a system that has never tried anything. The curiosity that comes from genuinely not knowing something, from a specific person encountering a specific problem they don't yet have the tools to solve, cannot be produced by a system that has access to the statistical average of all human curiosity at once.

Average curiosity is curiosity about nothing in particular.

Research published in Nature's Communications Psychology in 2026 found that AI's influence on scientific research is producing a monoculture: topical and methodological convergence that flattens intellectual imagination and crowds out the pluralism needed to keep knowledge adaptive.[6] The same dynamic operates in content. AI produces the center of mass of human thought on any given topic. It produces what the average informed person would say about curiosity, or failure, or learning. What it cannot produce is what a specific person discovered by being wrong in a way no one else has been wrong in exactly that way.

Carnegie Mellon mathematician Po-Shen Loh, in a lecture series that has reached over a million viewers, names what AI steals first: not creativity, not execution, but taste, the trained capacity to recognize what's worth pursuing before you can explain why.[10] Taste only develops through problems you haven't solved yet and the failure that precedes the solution. A system that inherits the statistical average of all previous answers has no taste. It has a center of mass.

That specificity is what mirror neurons respond to. A protagonist's genuine confusion activates recognition in an audience because it is particular: this specific person, this specific misconception, this specific moment of collapse. The neuroscience literature is clear that the more tightly the listener's neural patterns align with the storyteller's, the more durable the transfer of understanding.[5] Statistical plausibility is not the same as particularity. And the brain, it turns out, knows the difference.

Why Audiences Feel It Before They Can Name It

The research on AI content fatigue consistently describes a subjective experience that precedes any conscious analysis. Audiences "walk away feeling ungratified." Content "feels hollow." Something is missing that viewers cannot immediately articulate.

What they're responding to is the absence of genuine epistemic experience.

A 2025 paper on epistemic authority in AI-mediated learning found that generative AI lacks the intent and epistemic awareness that would allow it to recognize, let alone communicate, the limits of its own understanding.[7] Unlike human experts, who have earned their authority through a documented history of being wrong and correcting course, AI systems present information with a fluency and confidence that is unearned. There is no history of failure behind the output. No visible scar tissue from the Valley.

This matters acutely in B2B educational content, where the buyer's entire job is to assess credibility. A VP of Product evaluating a $200,000 purchase is not just evaluating information. They are evaluating the source of that information. They are asking, often unconsciously: has this person actually been where I am? Have they failed at this? Do they know what failure here actually looks like from the inside?

Forty-six percent of consumers already trust a brand less when they discover AI was used for services they assumed came from humans.[8] Among knowledge workers, a 2025 CHI conference study found that generative AI use correlates with self-reported reductions in cognitive effort and critical thinking.[9] The audience that AI-heavy content produces is not a more capable audience. It is a less critically engaged one.

These are not favorable conditions for educational content that builds the buyer confidence required to close a complex deal.

The conversation is reaching beyond academic journals. A 26-minute video by creator kate cassidy synthesizing the MIT and Carnegie Mellon research on AI's effect on critical thinking has drawn over 14,000 views through organic search, a sign that viewers already feel what the research describes and are looking for language to name it.[11]

The Moat Is Being Built Right Now

AI content is frictionless to produce and frictionless to consume. That frictionlessness is not a feature for educational content. It is the problem. The cognitive research covered in Series 01 and Series 02 is explicit: learning requires friction. Curiosity requires a gap. The Dunning-Kruger arc requires a valley. Mirror neurons require something real to recognize.

Content that removes all friction removes the mechanism of learning itself.

Stripe, Twilio, MongoDB, and AWS built compounding advantages not because their content was more polished, but because it was more particular. Real developers explaining real implementation decisions. Real failure modes narrated from the inside. Real questions answered by people who had genuinely not known the answer at some point.

That content compounds. Each piece builds on the credibility established by the last. The audience that watches a founder navigate a genuinely hard problem learns something they retain. They develop a relationship with the source of that understanding. They return.

AI content does not compound this way. It produces the same statistical average every time, at scale. There is no accumulation of earned authority. No history of visible thinking. No trail of scars.

In a market flooded with AI output, the organizations still building human educational content infrastructure are not just maintaining their existing audience relationships. They are separating from the field at a moment when the field has collectively decided to automate itself.

The seat identified in Series 09, the one nobody is filling for the executive buyer in complex technology, is not fillable by a system that has never been confused by a complex technology problem. That seat requires someone who has actually sat with the confusion, worked through it, and is willing to narrate the valley on camera. Capability is the wrong measure. Experience is the only one that counts.

Understanding requires having been wrong. AI can produce errors, but it has never experienced being wrong.

Citations

[1] Mercante, Alyssa. "After an oversaturation of AI-generated content, creators' authenticity and 'messiness' are in high demand." Digiday, January 14, 2026. https://digiday.com/media/after-an-oversaturation-of-ai-generated-content-creators-authenticity-and-messiness-are-in-high-demand/

[2] Meltwater/Kapwing analysis cited in: TechRadar. "AI Slop won in 2025 — fingerprinting real content might be the answer in 2026." TechRadar, 2026. https://www.techradar.com/ai-platforms-assistants/ai-slop-won-in-2025-fingerprinting-real-content-might-be-the-answer-in-2026

[3] OODAloop. "If 90% of Online Content Will Be AI-Generated by 2026, We Forecast a Deeply Human Anti-Content Movement in Response." OODAloop, 2026. https://oodaloop.com/analysis/archive/if-90-of-online-content-will-be-ai-generated-by-2026-we-forecast-a-deeply-human-anti-content-movement-in-response/

[4] Muller, Derek. "Is Most Published Research Wrong?" Veritasium, YouTube. Referenced in Series 01 (The Mystery Machine). Research on curiosity and information gaps: Loewenstein, G. "The psychology of curiosity: A review and reinterpretation." Psychological Bulletin, 116(1), 1994.

[5] Hasson, Uri. Princeton Neuroscience Institute research on neural coupling in storytelling. Referenced in Series 02 (The Valley Is the Feature). See also: Rizzolatti, G., & Craighero, L. "The mirror-neuron system." Annual Review of Neuroscience, 27, 2004.

[6] Communications Psychology, Nature. "AI is turning research into a scientific monoculture." Nature, 2026. https://www.nature.com/articles/s44271-026-00428-5

[7] Frontiers in Education. "Epistemic authority and generative AI in learning spaces: rethinking knowledge in the algorithmic age." Frontiers in Education, 2025. https://www.frontiersin.org/journals/education/articles/10.3389/feduc.2025.1647687/full

[8] FAD SYNC. "AI-Generated Content vs. Human Expertise: Why Trust Matters More Than Ever in 2026." FAD SYNC, 2026. https://fadsync.com/blog/ai-generated-content-vs-human-expertise-why-trust-matters-more-than-ever-in-2026/

[9] ACM CHI Conference. "The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers." Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. https://dl.acm.org/doi/10.1145/3706598.3713778

[10] Loh, Po-Shen. "The Only Trait for Success in the AI Era — How to Build It." EO, YouTube, September 2025. https://www.youtube.com/watch?v=xWYb7tImErI

[11] kate cassidy. "is AI making you dumb? | critical thinking crisis." YouTube, July 2025. https://www.youtube.com/watch?v=hihagLuMUg4

The Clarity Diagnostic is a structured 60-minute conversation that maps where your audience is losing understanding and what it's costing you in adoption, cycle length, and churn. Book a session at lumen8.media/clarity-diagnostic.

Michelle Lanier is the founder of Lumen8 Media, a narrative strategy and video systems consultancy helping organizations in complex domains translate what they do into content that actually builds understanding.